Introduction

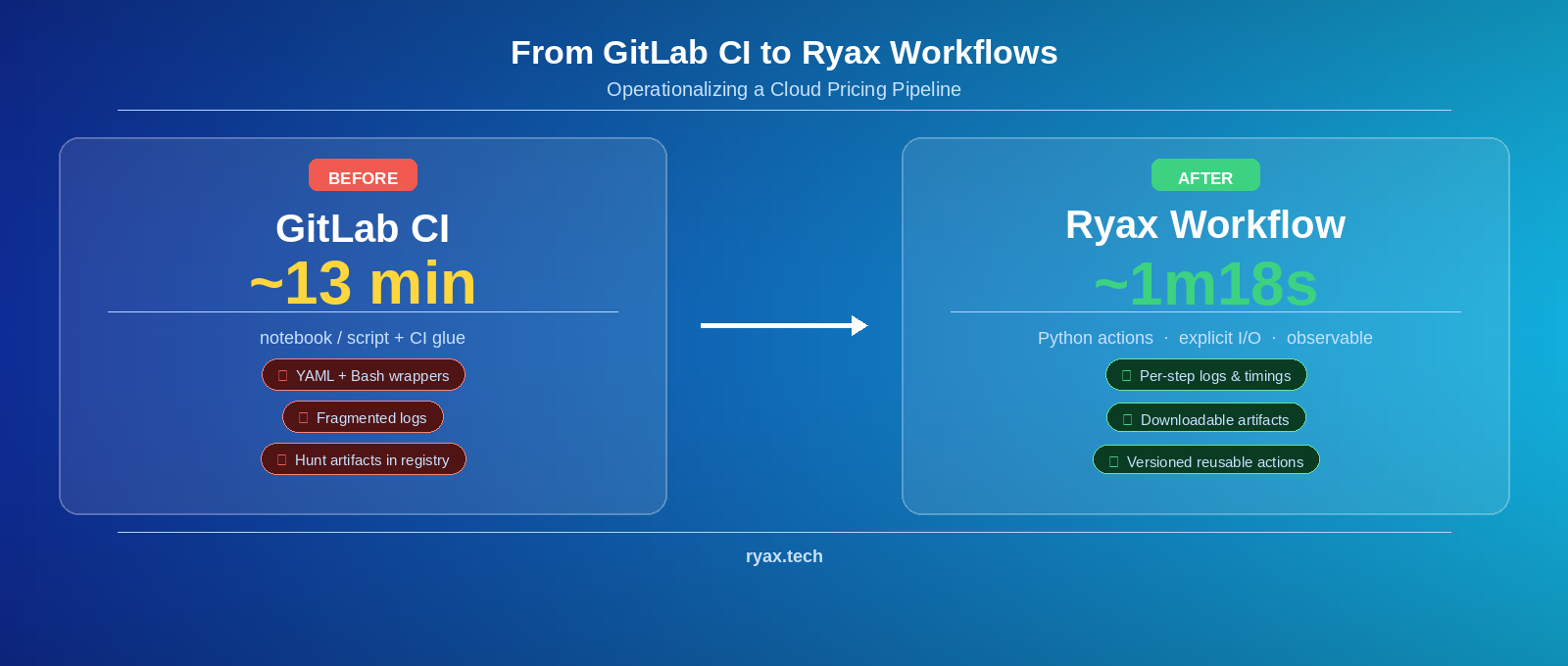

We recently migrated a scheduled cloud pricing pipeline from GitLab CI to a Ryax workflow. The goal stayed the same: refresh cloud provider catalogs, build a consolidated CSV snapshot, derive pricing artifacts for on‑prem cost estimation, and publish stable outputs for downstream systems (including our scheduler).

This post focuses on the data science / ML engineering perspective: how we went from “a working notebook/script + CI glue” to a workflow made of reusable, versioned Python actions with explicit inputs/outputs, visible logs, and downloadable artifacts.

All screenshots are redacted for public sharing (no personal identifiers).

Summary

- We refresh cloud catalogs (AWS + Scaleway in the reference run), snapshot a consolidated CSV, and derive two JSON artifacts used to estimate on‑prem resource costs.

- In GitLab CI, orchestration lived in YAML + Bash wrapper scripts around Python; debugging and artifact access were fragmented across jobs and external registries.

- In Ryax, the pipeline becomes a workflow graph of Python actions with explicit I/O, per‑step logs + timings, and artifacts available directly in the UI (and published to stable S3 keys).

- Reference timings: GitLab end‑to‑end runs were ~13 minutes; the Ryax snapshot node ran in ~1m18s (21,387 rows) with per‑node timing and logs visible.

Why we need pricing artifacts (not just raw catalogs)

Cloud instance pricing is usually a lookup: match an instance_type in a provider catalog and read a price in USD/hour.

On‑prem deployments (Kubernetes or Slurm) are different. We still need to reason about “cost” to support multi‑objective scheduling (e.g., cost vs performance vs availability), but there is no public “on‑prem price list”. Instead, we estimate equivalent costs by fitting simple models on cloud catalogs.

In practice, our pipeline produces three outputs:

- A consolidated CSV snapshot of provider pricing rows (our “source artifact”).

- instance_prices.json: per‑instance price records derived from the CSV (provider/region/instance → USD/hour).

- resource_prices.json: per‑resource unit price estimates (CPU, RAM, GPU) used to estimate on‑prem resources.

Each output embeds an as_of timestamp (YYYYMMDDTHHMMSSZ) so downstream consumers can trace exactly which snapshot was used.
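As a minimal sketch of the as_of format described above, the snippet below renders and parses a UTC timestamp in the YYYYMMDDTHHMMSSZ shape. The helper names (`format_as_of`, `parse_as_of`) are hypothetical and the example date is arbitrary; the pipeline's actual code may differ.

```python
from datetime import datetime, timezone

# strftime/strptime pattern for YYYYMMDDTHHMMSSZ ("T" and "Z" are literals).
AS_OF_FORMAT = "%Y%m%dT%H%M%SZ"


def format_as_of(moment: datetime) -> str:
    """Render a timezone-aware datetime as a UTC as_of string."""
    return moment.astimezone(timezone.utc).strftime(AS_OF_FORMAT)


def parse_as_of(value: str) -> datetime:
    """Parse an as_of string back into a timezone-aware UTC datetime."""
    return datetime.strptime(value, AS_OF_FORMAT).replace(tzinfo=timezone.utc)


# Arbitrary example date, for illustration only.
stamp = format_as_of(datetime(2024, 1, 15, 8, 30, 0, tzinfo=timezone.utc))
print(stamp)  # 20240115T083000Z
```

A fixed-width UTC string like this also sorts lexicographically in chronological order, which is convenient when comparing snapshots by name.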

Here is a short excerpt of the resource pricing artifact (redacted for brevity):

Today we expose the median (p50). We can extend this to additional percentiles (p10/p90) if scheduling policies need uncertainty/risk bounds.
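To make the percentile idea concrete, here is a hedged sketch of how p10/p50/p90 could be computed over a list of per-unit price samples with the Python standard library. The function name, the output keys, and the sample values are all assumptions for illustration; they are not the pipeline's actual model or real catalog data.

```python
import statistics


def summarize_unit_prices(samples: list[float]) -> dict[str, float]:
    """Return p10/p50/p90 for per-unit price samples (e.g. USD/hour per vCPU)."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate percentiles")
    # n=10 yields nine cut points: the first is p10, the last is p90.
    deciles = statistics.quantiles(samples, n=10, method="inclusive")
    return {
        "p10": deciles[0],
        "p50": statistics.median(samples),
        "p90": deciles[-1],
    }


# Hypothetical per-vCPU prices, for illustration only (not real catalog data).
example = [0.020, 0.025, 0.030, 0.035, 0.040]
summary = summarize_unit_prices(example)
print(summary["p50"])  # 0.03
```

Keeping p50 as the headline value and adding p10/p90 alongside it would let existing consumers keep reading the median unchanged.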

“Before”: GitLab CI works — but orchestration becomes glue

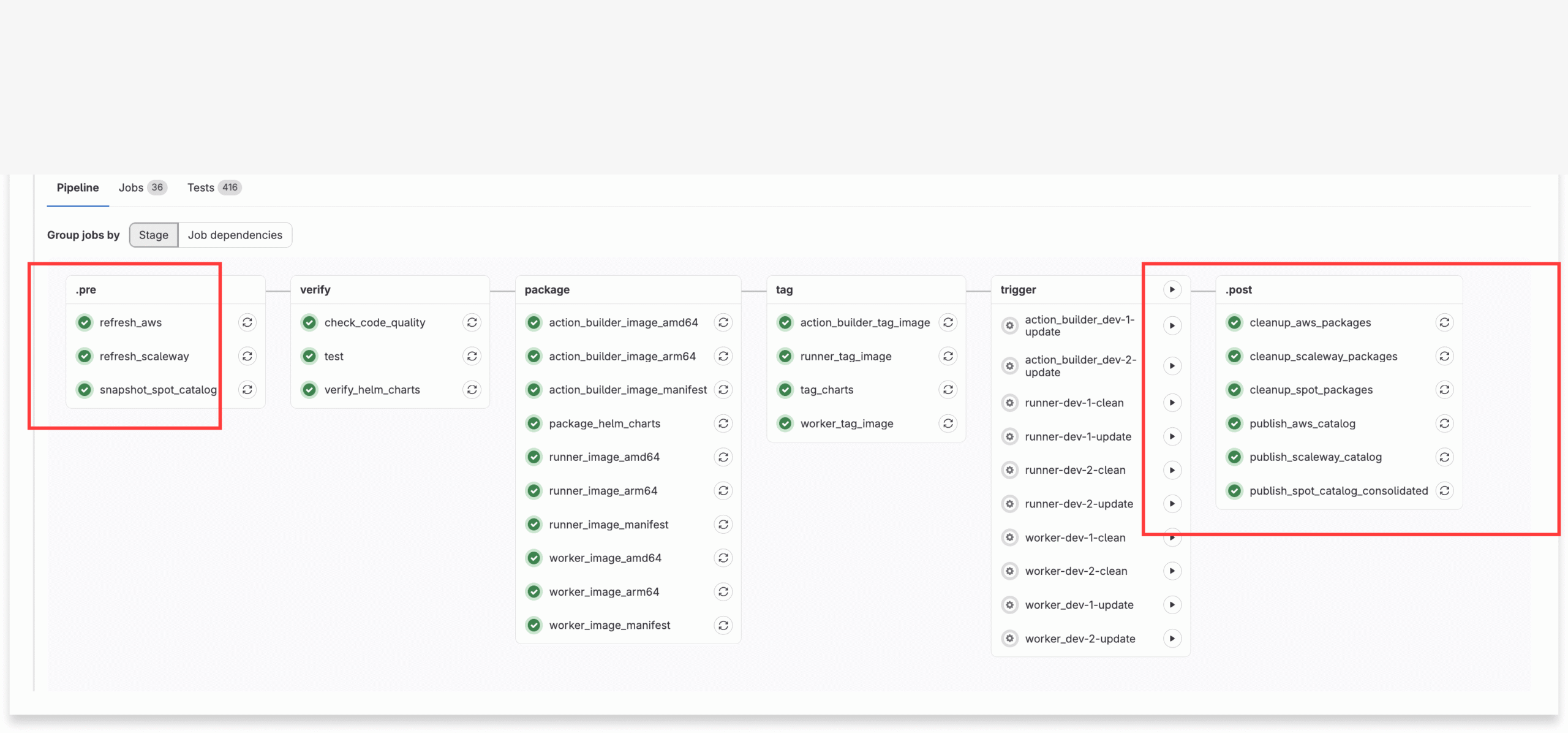

Our original implementation lived in GitLab CI. The pipeline was scheduled (cron), ran multiple jobs (refresh → snapshot → publish → cleanup), and published artifacts to external systems (package registry + stable URLs).

This approach is valid and common. But over time, the orchestration layer tends to grow:

- YAML job graph + environment wiring

- Bash wrapper scripts to install tooling, set variables, move files, and handle publishing details

- Python code that does the actual data retrieval and transformations

Debugging becomes “follow the trail”: open job A logs, then job B logs, then figure out where an artifact ended up and whether it was the version you expected.

“After”: a Ryax workflow made of notebook-friendly actions

In Ryax, we rebuilt the same pipeline as a workflow graph of Python actions. Each action:

- declares its inputs/outputs in ryax_metadata.yaml

- writes artifacts under deterministic paths in /tmp

- emits structured logs

- can validate boundary conditions (e.g., “no empty snapshot”, “as_of must match”)
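The boundary checks named in the last bullet can be sketched as a plain Python function. This is an illustration under assumptions: the CSV column names, the `summary` dictionary shape, and the `SnapshotValidationError` class are hypothetical, and how such a function is wired into a Ryax action's entry point is not shown here.

```python
import csv
import io


class SnapshotValidationError(ValueError):
    """Raised when a snapshot violates a boundary condition."""


def validate_snapshot(csv_text: str, expected_as_of: str, summary: dict) -> int:
    """Enforce "no empty snapshot" and "as_of must match"; return the row count."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    if not rows:
        raise SnapshotValidationError("snapshot is empty")
    if summary.get("as_of") != expected_as_of:
        raise SnapshotValidationError(
            f"as_of mismatch: expected {expected_as_of!r}, got {summary.get('as_of')!r}"
        )
    return len(rows)


# Hypothetical columns and values, for illustration only.
sample_csv = (
    "provider,region,instance_type,usd_per_hour\n"
    "aws,eu-west-1,example.large,0.10\n"
    "scaleway,fr-par,EXAMPLE-1,0.05\n"
)
row_count = validate_snapshot(
    sample_csv, "20240115T083000Z", {"as_of": "20240115T083000Z"}
)
print(row_count)  # 2
```

Raising a dedicated exception makes the failure show up in the node's logs with a clear reason instead of producing an empty or mismatched artifact downstream.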

This feels close to how many of us prototype: we develop transformations in a notebook or a script, then “package” the stable steps into reusable units. The difference is that the units are operational by construction: versioned, typed at the edges, and observable.

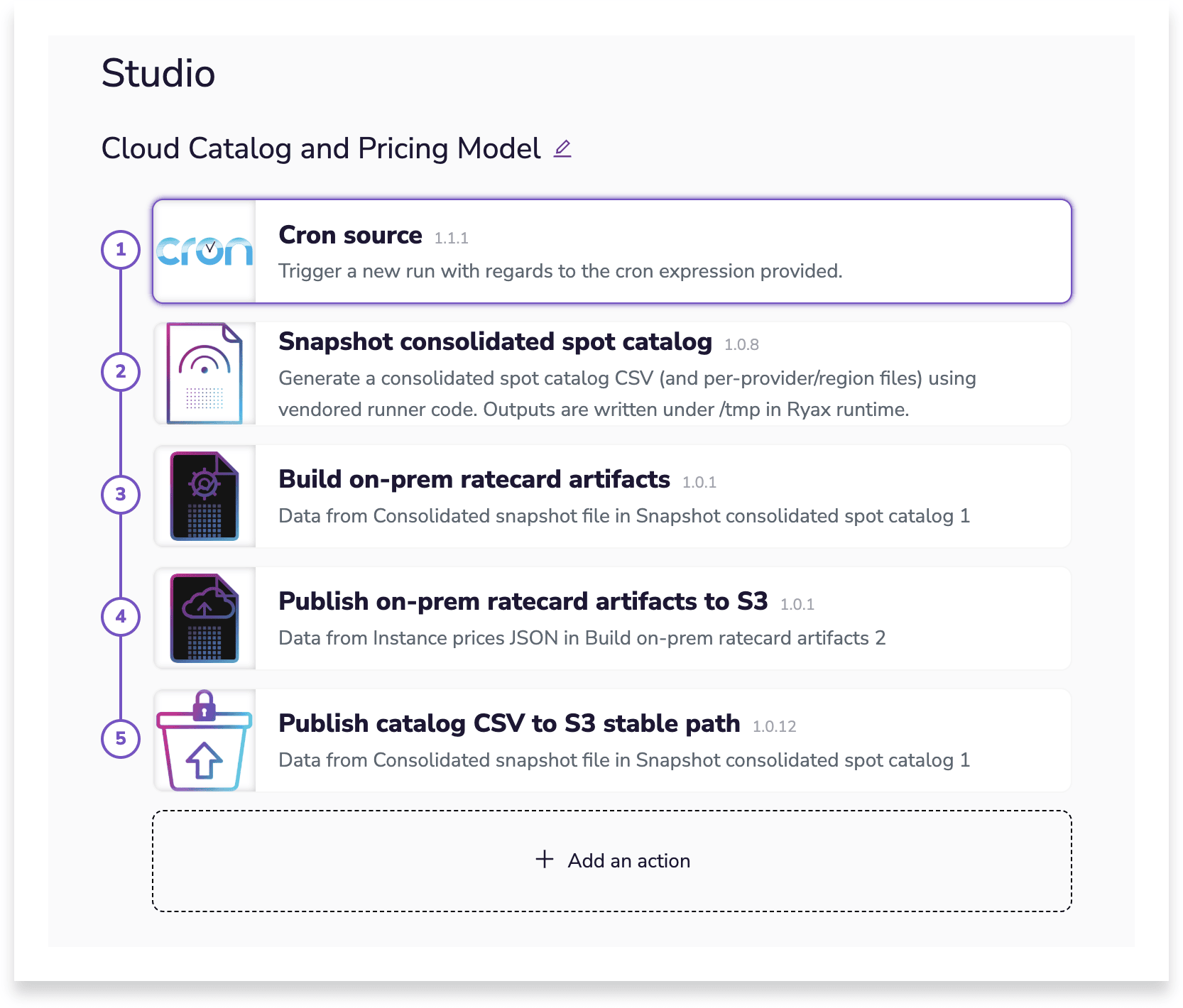

The workflow in four actions

Here is the minimal chain we run:

- catalog_spot_snapshot (v1.0.8): refresh catalogs + build the consolidated CSV snapshot

  Output: consolidated CSV + summary (as_of, row count, status).

- catalog_onprem_ratecard_build (v1.0.1): derive pricing artifacts from the snapshot

  Output: instance_prices.json and resource_prices.json (both embed the same as_of).

- catalog_onprem_ratecard_s3_publish (v1.0.1): publish JSON artifacts to stable S3 keys

  Invariant: validates both JSONs share the same as_of before publishing.

- catalog_s3_publish_stable (v1.0.12): publish the CSV snapshot to a stable S3 key (“latest”)

  Invariant: runs only after JSON publish succeeds (gate semantics).
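The two publish invariants above can be illustrated with a short sketch. In Ryax the gate is expressed by the workflow graph itself; the sequential function below only mimics that ordering in one place. The S3 keys, the top-level `as_of` field, and the injected `upload` callable are assumptions for illustration (a real uploader might wrap an S3 client call).

```python
import json
from typing import Callable

Uploader = Callable[[str, bytes], None]

# Hypothetical stable keys; the real bucket layout is not shown in this post.
INSTANCE_KEY = "ratecard/instance_prices.json"
RESOURCE_KEY = "ratecard/resource_prices.json"
CSV_LATEST_KEY = "snapshots/latest.csv"


def publish_all(
    instance_json: str, resource_json: str, csv_bytes: bytes, upload: Uploader
) -> str:
    """Publish JSON artifacts first, then the CSV; return the shared as_of."""
    # Assumes each JSON document carries a top-level "as_of" field.
    instance_as_of = json.loads(instance_json).get("as_of")
    resource_as_of = json.loads(resource_json).get("as_of")
    if not instance_as_of or instance_as_of != resource_as_of:
        raise ValueError(f"as_of mismatch: {instance_as_of!r} vs {resource_as_of!r}")
    upload(INSTANCE_KEY, instance_json.encode("utf-8"))
    upload(RESOURCE_KEY, resource_json.encode("utf-8"))
    # Gate: this line is reached only if both JSON uploads returned without raising.
    upload(CSV_LATEST_KEY, csv_bytes)
    return instance_as_of


uploaded: list[str] = []
as_of = publish_all(
    '{"as_of": "20240115T083000Z"}',
    '{"as_of": "20240115T083000Z"}',
    b"provider,region\n",
    lambda key, body: uploaded.append(key),
)
print(uploaded)
```

Publishing the CSV last means the "latest" snapshot never points at data whose derived JSON artifacts failed to publish.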

The full interface (including optional parameters) lives in each action’s ryax_metadata.yaml.

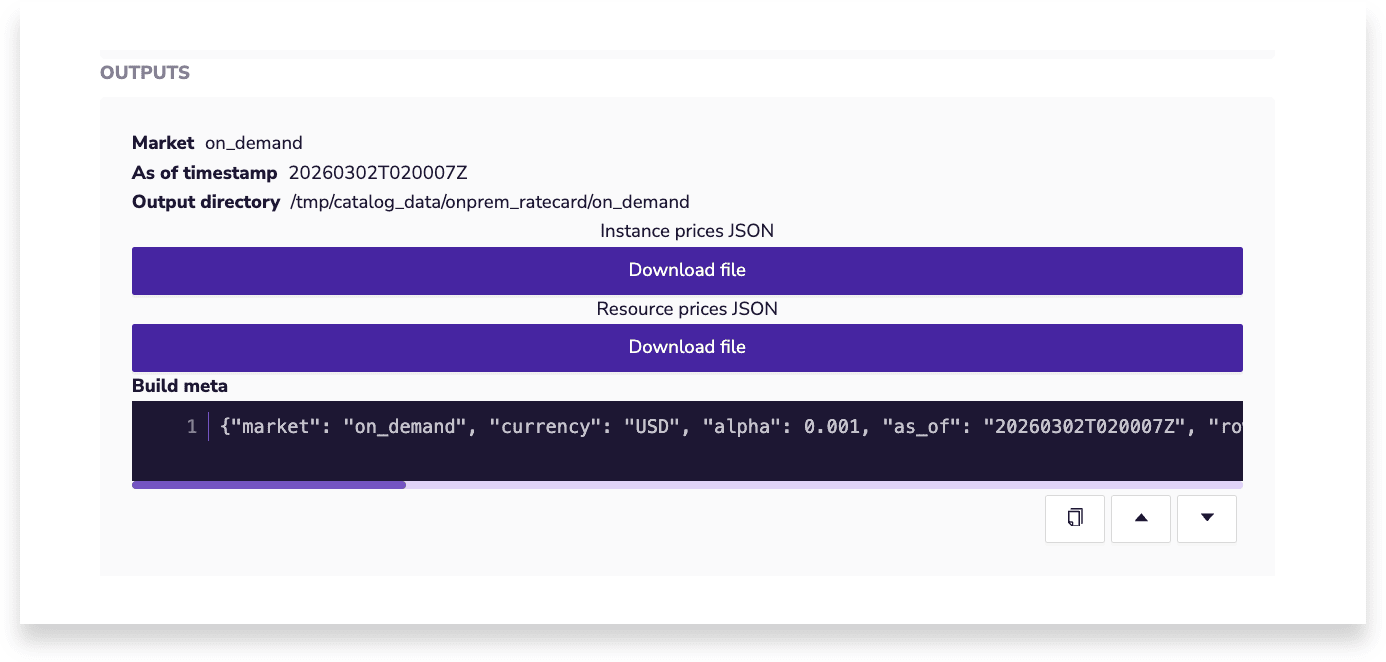

Artifacts become first-class (downloadable in the UI)

One practical difference for iteration: artifacts are directly downloadable from the run UI. If a downstream consumer looks “off”, we can inspect and download the exact CSV/JSON that fed the model, without hunting for the right registry object or S3 key.

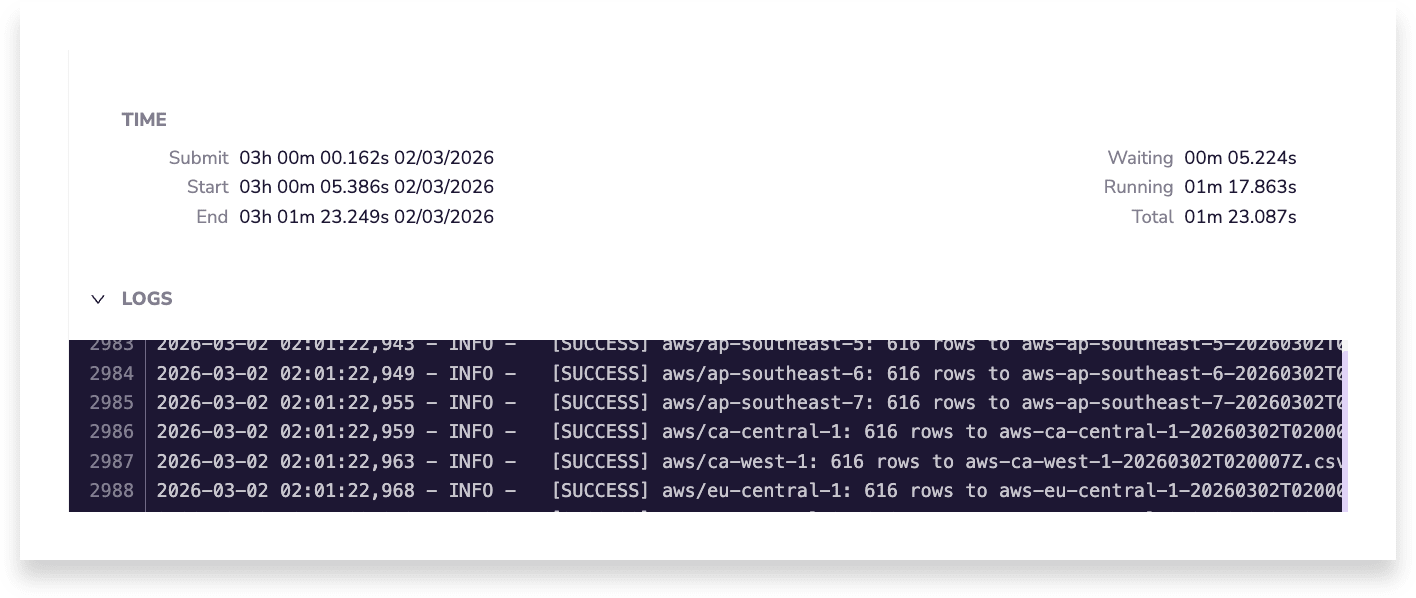

Observability: per-step time + logs in one place

For data pipelines, time and logs are part of the product. Ryax exposes them per action node, which turns debugging into “inspect the node that produced the artifact.”

In our reference run (AWS + Scaleway), the consolidated snapshot contained 21,387 rows and the snapshot node took:

- waiting: 5.224s

- running: 1m17.863s

- total: 1m23.087s

A small performance story: batch fetching to reduce throttling pressure

Catalog refresh is often I/O-bound. A naive “many small calls” implementation can be slow and is more likely to hit provider rate limits.

We improved this by batching and using page-based retrieval where available (instead of repeatedly querying small fragments). The goal was to reduce call overhead and make runtime more predictable as regions/providers scale.
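As a rough sketch of page-based retrieval, the generator below follows a next-page token until the provider reports no more pages. The `fetch_page` callable, the token scheme, and the in-memory fake provider are hypothetical stand-ins; real provider APIs differ in how they paginate.

```python
from typing import Callable, Iterator, Optional

Page = tuple[list[dict], Optional[str]]  # (items, next_page_token)


def iter_catalog_items(fetch_page: Callable[[Optional[str]], Page]) -> Iterator[dict]:
    """Yield every item by following next-page tokens until none is returned."""
    token: Optional[str] = None
    while True:
        items, token = fetch_page(token)
        yield from items
        if token is None:
            break


def make_fake_fetcher(rows: list[dict], page_size: int):
    """Build an in-memory stand-in for a paginated provider endpoint."""
    calls = {"count": 0}

    def fetch_page(token: Optional[str]) -> Page:
        calls["count"] += 1
        start = int(token) if token is not None else 0
        end = start + page_size
        next_token = str(end) if end < len(rows) else None
        return rows[start:end], next_token

    return fetch_page, calls


# Hypothetical rows, for illustration only.
rows = [{"instance_type": f"type-{i}"} for i in range(250)]
fetch_page, calls = make_fake_fetcher(rows, page_size=100)
fetched = list(iter_catalog_items(fetch_page))
print(len(fetched), calls["count"])  # 250 3
```

With a page size of 100, the 250 rows arrive in 3 calls rather than 250 single-item lookups, which is the kind of call reduction that eases throttling pressure.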

As directional evidence (not a strict apples-to-apples benchmark): in a legacy “refresh providers” step we observed ~9m05s running time, while the integrated snapshot action in the new workflow ran in ~1m18s for the reference run described above.

Takeaways for data teams

- Treat artifacts as products. If you can download and inspect them, you can debug faster and build trust in downstream consumers.

- Make invariants explicit. Gates, as_of consistency checks, and “reject empty data” rules prevent silent corruption.

- Prefer reusable steps over orchestration glue. Keep Python where the data logic lives; keep orchestration declarative and observable.

- Pin runtimes to reduce drift. If a workflow ran last month, it should still run the same way next month.

What’s next

- Add distribution detail (p10/p90) to resource_prices.json if we need uncertainty-aware scheduling.

- Capture and publish per-node timings for build/publish steps to complete the end-to-end performance picture.

- Expand provider/region coverage while keeping action boundaries strict and testable.