Offload your data analytics workload to HPC

From Cloud/Kubernetes to HPC/Slurm, and back

Seamlessly

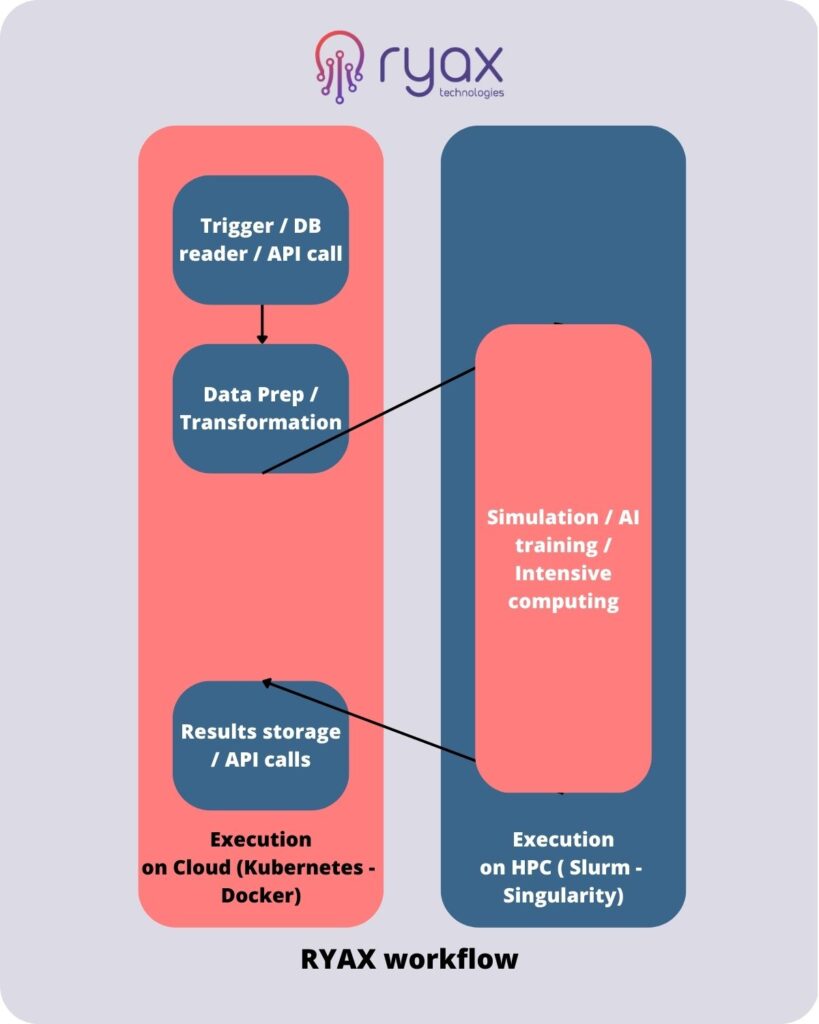

To make life easier for developers who need HPC platforms for their data analytics workflows, we have enhanced RYAX with the ability to offload work from Cloud to HPC seamlessly.

How does it work?

The user provides the code of their data analytics workflows along with its dependencies, such as a Big Data framework (Spark, etc.) or an AI library (PyTorch, etc.), and RYAX automatically packages it both as a Singularity image for execution on HPC-based systems and as a Docker image for execution on Cloud-based systems.
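As a rough illustration of dual packaging, the sketch below assembles the two command lines one might run by hand: a `docker build`, followed by a `singularity build` that converts the local Docker image through the `docker-daemon://` source. This is not RYAX's internal packaging logic, which the page does not describe. The image name, the output file name, and the helper functions are hypothetical, and a Dockerfile is assumed to exist in the build context.

```python
from typing import List


def docker_build_cmd(image: str, context_dir: str = ".") -> List[str]:
    """Command that builds a Docker image from the Dockerfile in context_dir."""
    return ["docker", "build", "-t", image, context_dir]


def singularity_build_cmd(image: str, sif_path: str) -> List[str]:
    """Command that converts a locally built Docker image into a Singularity image file.

    The image reference must include a tag (for example "name:1.0").
    """
    return ["singularity", "build", sif_path, f"docker-daemon://{image}"]


if __name__ == "__main__":
    image = "analytics-action:1.0"  # hypothetical image name
    commands = (
        docker_build_cmd(image),
        singularity_build_cmd(image, "analytics-action.sif"),  # hypothetical file name
    )
    # Only print the commands; running them requires Docker and Singularity.
    for cmd in commands:
        print(" ".join(cmd))
```

The functions only return argument lists, so they can be inspected or passed to `subprocess.run` on a machine where both tools are installed.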

Then, during deployment, the user decides which of the workflow's actions should be executed on HPC.

RYAX integrates with the Slurm resource manager and Singularity. This enables an action to be offloaded to the HPC system over SSH, with adapted stage-in / stage-out mechanisms that transfer data from Cloud to HPC and back.
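The offloading steps described above can be sketched as a sequence of plain commands: copy the inputs and a batch script to the cluster (stage-in), submit the job over SSH and wait for it, then copy the results back (stage-out). This is a minimal sketch under stated assumptions, not RYAX's actual implementation: the host, directories, job name, and helper functions are hypothetical, and it assumes `scp`, `ssh`, `sbatch`, and `singularity` are available on the relevant machines.

```python
import shlex
from typing import List


def slurm_script(sif_path: str, command: List[str], job_name: str = "offloaded-action") -> str:
    """Render a minimal Slurm batch script that runs a command inside a Singularity image."""
    inner = " ".join(shlex.quote(part) for part in command)
    return "\n".join([
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        "#SBATCH --nodes=1",
        f"singularity exec {shlex.quote(sif_path)} {inner}",
        "",
    ])


def offload_plan(host: str, workdir: str, local_inputs: str, local_outputs: str) -> List[List[str]]:
    """List the commands for stage-in, job submission over SSH, and stage-out."""
    return [
        ["scp", "-r", local_inputs, f"{host}:{workdir}/inputs"],     # stage-in: input data
        ["scp", "job.sh", f"{host}:{workdir}/job.sh"],               # stage-in: batch script
        ["ssh", host, f"sbatch --wait {workdir}/job.sh"],            # submit and block until done
        ["scp", "-r", f"{host}:{workdir}/outputs", local_outputs],   # stage-out: results
    ]


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    print(slurm_script("action.sif", ["python", "run.py"]))
    for step in offload_plan("user@hpc.example.org", "/scratch/job1", "./inputs", "./outputs"):
        print(" ".join(step))
```

Using `sbatch --wait` keeps the sketch simple because the SSH call returns only once the job has finished; a production integration would more likely submit the job and poll its state.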

HPC can greatly benefit Big Data/AI applications, since large datasets can be processed in a timely manner. But the steep learning curve of HPC system software and parallel programming techniques, along with rigid environment deployment and resource management, remains an important obstacle to using HPC for Big Data analytics.

In addition, classic Cloud and Big Data/AI tools for containerization and orchestration cannot be applied directly on HPC systems because of security and performance drawbacks. Hence workflows mixing HPC and Big Data/AI executions cannot yet be combined intelligently using off-the-shelf software.

RYAX is a low-code platform for creating workflow-based Cloud applications. It enables the design and deployment of data analytics workflows using various supported Big Data frameworks and AI libraries.


RYAX works with the following HPC clusters: ATOS (France), IDRIS (France), LRZ (Germany), Cineca (Italy), PSNC (Poland), HLRS (Germany), and BSC (Spain).