Introduction

This second part of the case study builds on the infrastructure and cost-optimization strategies described in Part 1 (read here), and focuses on the retrieval-augmented generation (RAG) pipeline that powers automated KPI extraction from financial reports.

Technical implementation

Core components

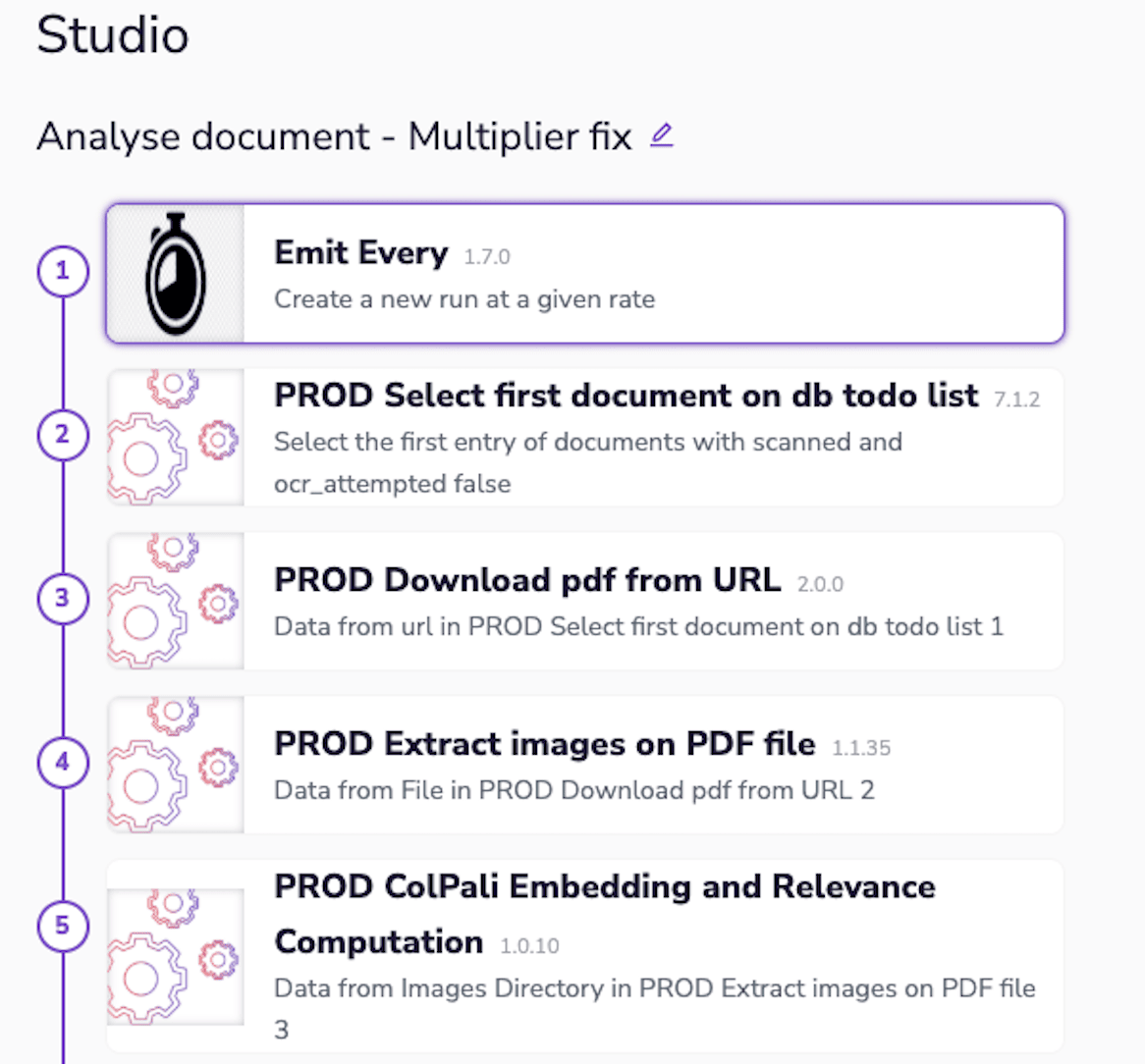

Our KPI extraction pipeline is built on three fundamental components, each implemented as modular actions within the Ryax platform for maximum flexibility and maintainability.

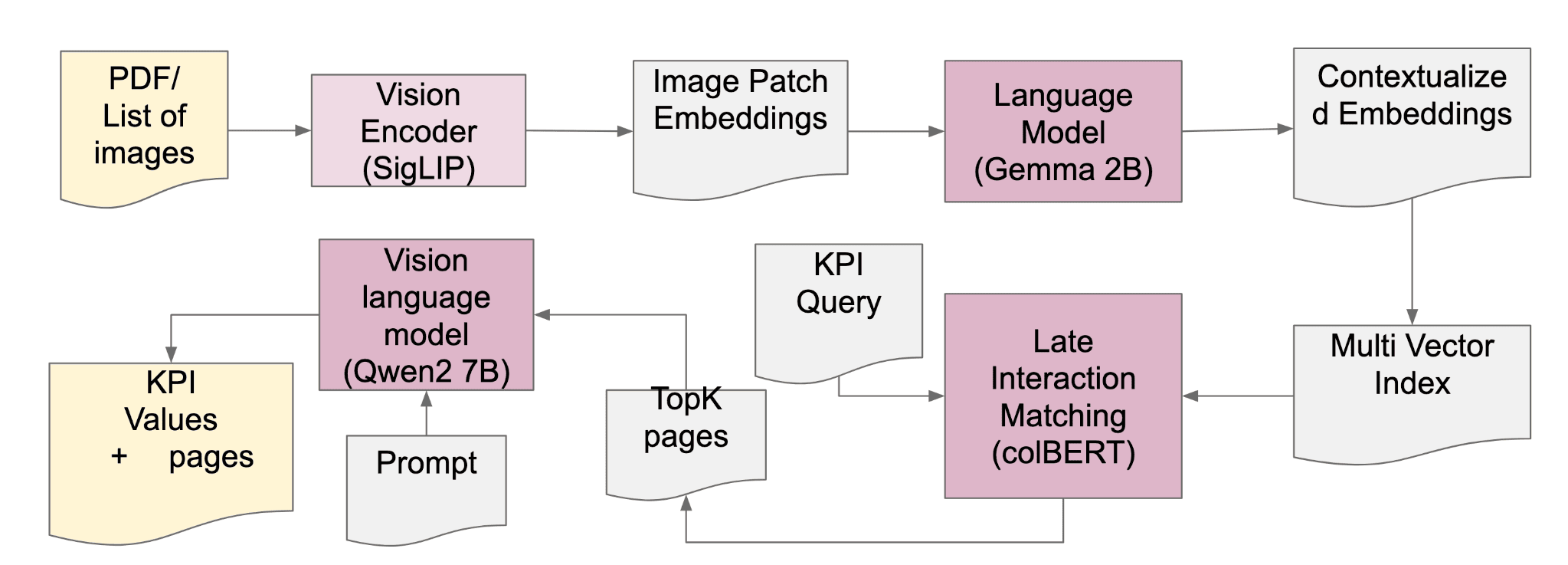

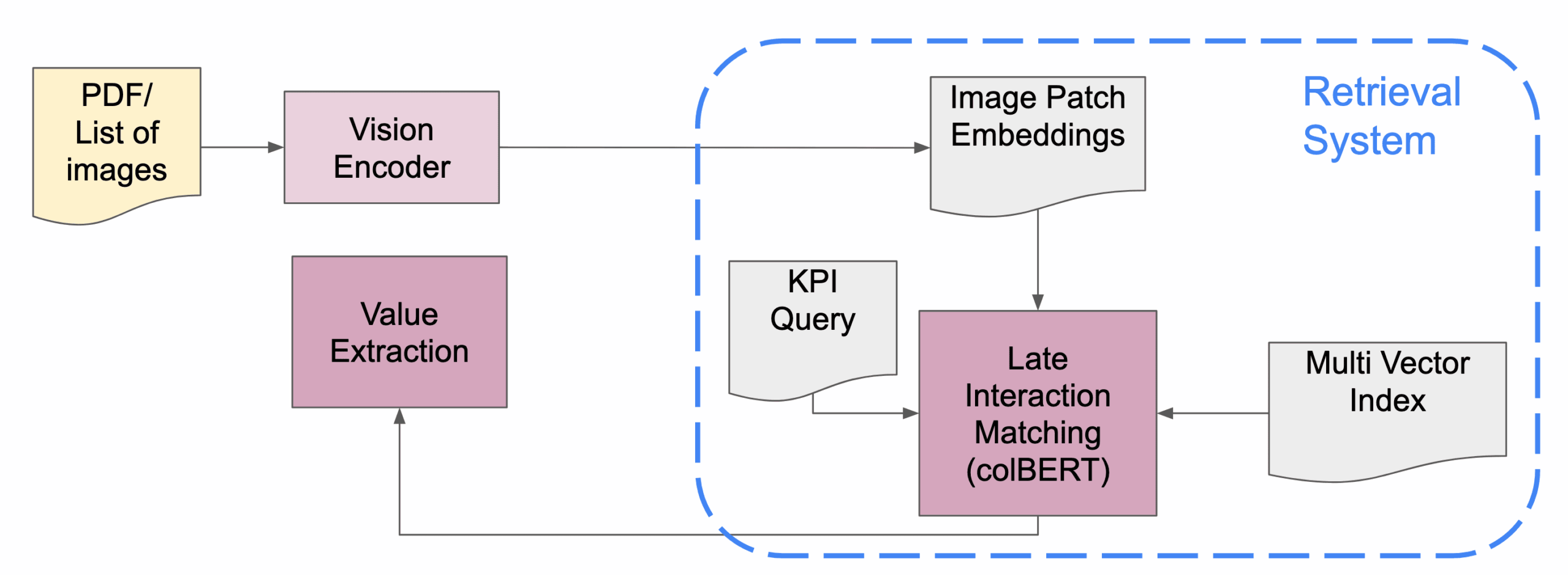

Our system architecture integrates vision-language modeling with efficient information retrieval, orchestrated through Ryax's workflow engine.

Integrating Vision Language Models (VLMs) like ColPali into document processing workflows enhances the recognition of financial table values. ColPali directly embeds document images, capturing both textual and visual elements without complex preprocessing. It employs a late interaction mechanism, comparing query tokens with document image patches to improve retrieval accuracy. This approach streamlines the extraction of financial data from documents, improving efficiency and accuracy.

1. Document processing

The document processing stage handles the critical first step of converting financial reports into a standardized format for analysis. Ryax's workflow engine orchestrates parallel PDF processing while managing memory constraints effectively.

Key features:

- Automatic PDF to image conversion with configurable DPI settings

- Memory-efficient parallel processing through chunking (50-page chunks)

- Support for both digital and scanned documents through adaptive preprocessing
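
The 50-page chunking mentioned above can be sketched as a small range generator. This is a minimal illustration, not the pipeline's actual code: the function name `page_chunks` and its signature are assumptions, and the rasterization step itself is left out.

```python
from typing import Iterator, Tuple


def page_chunks(total_pages: int, chunk_size: int = 50) -> Iterator[Tuple[int, int]]:
    """Yield inclusive, 1-based (first_page, last_page) ranges covering the document."""
    if total_pages < 0 or chunk_size <= 0:
        raise ValueError("total_pages must be >= 0 and chunk_size must be > 0")
    for first in range(1, total_pages + 1, chunk_size):
        yield first, min(first + chunk_size - 1, total_pages)


# Each range could then be rendered independently (for example by a PDF
# rasterizer that accepts first/last page arguments), so that a worker only
# holds one chunk of page images in memory at a time.
ranges = list(page_chunks(120))
print(ranges)  # [(1, 50), (51, 100), (101, 120)]
```

Working with page ranges rather than loaded images keeps the scheduling logic independent of the rendering library and of the chosen DPI.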

2. Information retrieval system

The cornerstone of our KPI extraction pipeline is a sophisticated information retrieval system that combines visual and semantic understanding. Through Ryax's workflow engine, we implement a multi-stage retrieval process that achieves performance comparable to BEIR benchmark standards. At its core lies the ColPali embedding model, which processes document pages through carefully controlled batching:

```python
# Efficient embedding generation with memory management
top_images = resize_images(original_images, factor=factor)
embeddings = get_colpali_embeddings(model, processor, top_images)
```

This adaptive approach enables processing of high-resolution financial documents while managing GPU memory constraints effectively. The embeddings capture both visual layout and textual content, crucial for understanding financial tables and statements.

We implement ColBERT-style late interaction matching, allowing for:

- Fine-grained similarity computation between queries and documents

- Maintenance of contextual information throughout the matching process

- Efficient handling of both structured and unstructured document regions
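
Late interaction scoring of this kind is commonly implemented as a "MaxSim" sum: each query token is matched to its most similar document vector, and those maxima are added up. The sketch below illustrates the idea with toy 2-dimensional vectors in plain Python. The function names and the sample embeddings are hypothetical, and a production system would use batched tensor operations instead.

```python
from typing import List, Sequence

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def maxsim_score(query_vecs: List[Vector], page_vecs: List[Vector]) -> float:
    """Late-interaction score: for each query token take the best-matching
    page vector (maximum dot product), then sum those maxima."""
    if not page_vecs:
        return 0.0
    return sum(max(dot(q, p) for p in page_vecs) for q in query_vecs)


# Toy embeddings; real models use far higher dimensions and many more vectors.
query = [[1.0, 0.0], [0.0, 1.0]]
page_a = [[0.9, 0.1], [0.2, 0.8]]  # has a good match for both query tokens
page_b = [[0.9, 0.1], [0.8, 0.2]]  # only matches the first query token well

print(maxsim_score(query, page_a))
print(maxsim_score(query, page_b))
```

Because every query token contributes its own best match, a page that covers only part of the query (like `page_b` here) scores lower than one that covers all of it.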

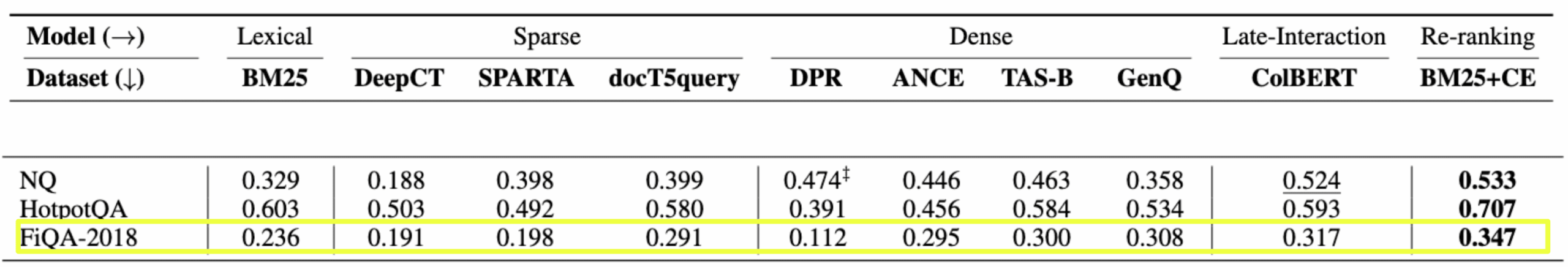

Our implementation achieves performance metrics competitive with specialized financial QA systems:

- nDCG[1]@10 scores comparable to (significantly above) FiQA-2018 benchmarks

- 40% accuracy maintained on scanned documents

- Resilient performance across various document formats

The system employs a multi-vector indexing strategy that balances retrieval accuracy with computational efficiency:

- Document pages are represented by multiple embedding vectors

- Contextual information is preserved through regional embeddings

- Efficient similarity search implementation for rapid KPI location

nDCG (normalized Discounted Cumulative Gain) measures ranking quality by evaluating how well retrieved results are ordered. It is computed as the ratio of DCG (which gives higher weight to relevant items at the top) to IDCG (the DCG of the ideal ranking). nDCG@k evaluates ranking for the top k results, e.g., nDCG@5 for the top 5 and nDCG@10 for the top 10, rewarding systems that prioritize relevance in earlier results. This metric is widely used in RAG systems to assess the quality of retrieved context documents for query-driven tasks.
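
As a concrete illustration of the definition, the sketch below computes nDCG@k using the common logarithmic discount, DCG@k = Σ rel_i / log2(i + 1) for ranks i = 1..k. It assumes the ideal ranking is obtained by sorting the relevance labels of the retrieved list itself, which is a simplification. The function names are illustrative and not taken from the pipeline.

```python
import math
from typing import Sequence


def dcg_at_k(relevances: Sequence[float], k: int) -> float:
    """DCG@k: relevance at rank i (1-based) is discounted by log2(i + 1)."""
    return sum(rel / math.log2(i + 2) for i, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances: Sequence[float], k: int) -> float:
    """nDCG@k: DCG of the given order divided by DCG of the ideal order."""
    ideal = sorted(relevances, reverse=True)
    idcg = dcg_at_k(ideal, k)
    return dcg_at_k(relevances, k) / idcg if idcg > 0 else 0.0


# Relevance labels in the order the system returned the pages.
print(ndcg_at_k([1, 0, 0], k=3))  # relevant page ranked first -> 1.0
print(ndcg_at_k([0, 0, 1], k=3))  # same page ranked third -> 0.5
```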

The diagram illustrates how Ryax's workflow engine orchestrates these components, managing GPU resources and data flow between stages. The platform's built-in monitoring capabilities allow tracking of embedding quality and retrieval performance across different document types.

Performance optimizations include:

- Dynamic batch sizing based on available GPU memory

- Automated fallback to CPU processing for memory-intensive documents

- Caching of intermediate embeddings for frequently accessed documents
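
One simple way to size batches from available memory is to divide a safety-discounted share of free memory by an estimated per-image footprint. The sketch below assumes exactly that. The function name, the 80% safety share, and the cap of 32 are hypothetical defaults, not values from the deployed system.

```python
def pick_batch_size(free_bytes: int, bytes_per_image: int,
                    max_batch: int = 32, safety_percent: int = 80) -> int:
    """Return how many images fit in the usable share of free memory.

    A result of 0 means not even one image fits, which a caller could treat
    as the signal to fall back to CPU processing.
    """
    if bytes_per_image <= 0:
        raise ValueError("bytes_per_image must be positive")
    usable = free_bytes * safety_percent // 100
    return max(0, min(max_batch, usable // bytes_per_image))


# Example: 10 GiB free, roughly 512 MiB needed per page image (made-up figures).
# In a PyTorch setup, the free-memory figure could come from
# torch.cuda.mem_get_info(); that call is not made here.
batch = pick_batch_size(10 * 1024**3, 512 * 1024**2)
print(batch)  # 16
```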

This retrieval system forms the bridge between raw document processing and precise KPI extraction, maintaining consistent performance across both digital and scanned reports. Through Ryax's infrastructure, we achieve reliable scaling while managing computational resources efficiently.

3. Value extraction

The final stage of our automated pipeline leverages advanced vision-language modeling for precise KPI extraction. Implementation through Ryax's workflow engine enables efficient model deployment and resource management.

- Qwen2-VL model integration for visual-text understanding:

Our system uses the Qwen2-VL 7B-parameter model, deployed as a containerized component within Ryax's infrastructure. The model processes identified relevant pages through carefully crafted prompts:

```python
prompt = f"""Analyze ALL provided images to:
1. Identify the table containing values related to: {term_list}
2. Extract the SINGLE most relevant value for year {year}
Format: "VALUE|UNIT" (e.g., "2,801|£'000s")"""
```
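
A response in the `"VALUE|UNIT"` format still has to be turned into a number and a unit string. The following is a minimal parsing sketch. The function name and the handling of parenthesized negatives are assumptions, and the real pipeline may validate the model output differently.

```python
from typing import Tuple


def parse_value_unit(response: str) -> Tuple[float, str]:
    """Parse a 'VALUE|UNIT' answer such as "2,801|£'000s" into (value, unit)."""
    text = response.strip().strip('"')
    value_part, sep, unit_part = text.partition("|")
    if not sep:
        raise ValueError(f"expected 'VALUE|UNIT', got: {response!r}")
    cleaned = value_part.strip().replace(",", "")
    # Financial statements often show negatives in parentheses, e.g. (1,234).
    if cleaned.startswith("(") and cleaned.endswith(")"):
        cleaned = "-" + cleaned[1:-1]
    return float(cleaned), unit_part.strip()


value, unit = parse_value_unit("2,801|£'000s")
print(value, unit)
```

Raising on a missing separator, rather than guessing, makes malformed model output visible to the downstream validation step.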

This structured approach enables consistent extraction across various document formats while maintaining contextual understanding.

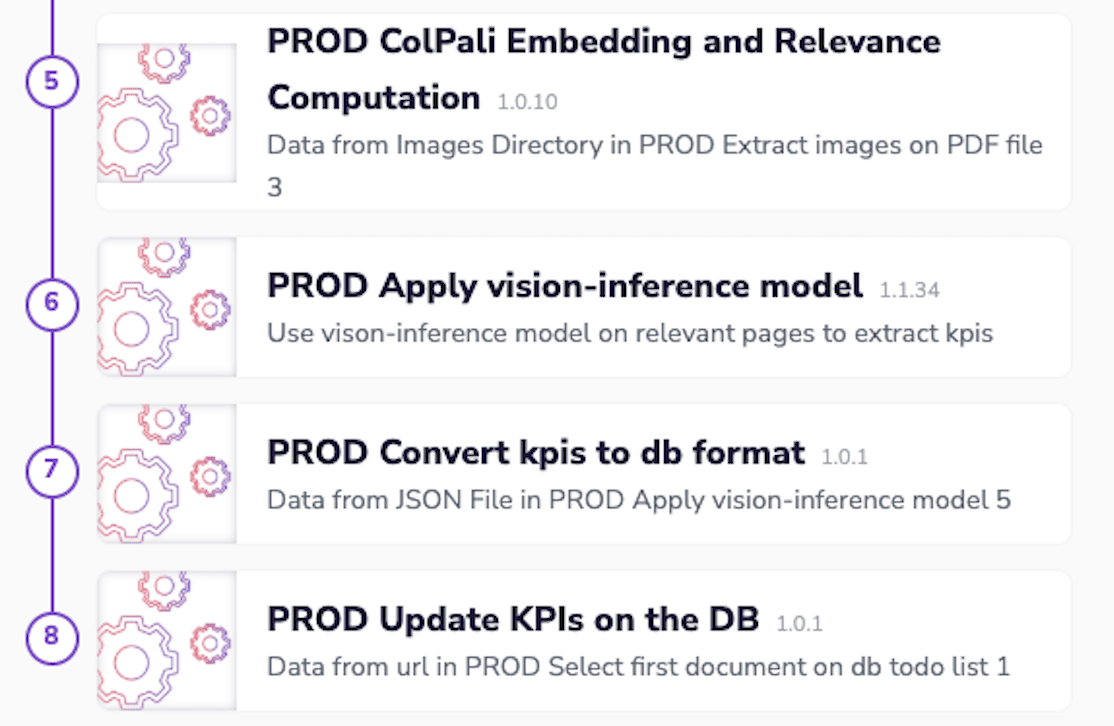

Workflow architecture

1. Input processing

Document ingestion and preprocessing is handled through a series of Ryax actions:

- PDF downloading and validation

- Parallel image extraction (configurable DPI and batch size)

- Memory-efficient document chunking

The workflow interface demonstrates the sequential processing stages with built-in monitoring and error handling.

2. KPI location

The system employs a multi-stage approach for precise KPI identification. The page selection process is as follows:

- Initial embedding generation through ColPali

- Relevance scoring using late interaction matching

- Top-K page selection (configurable, typically K=3)
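
Once each page has a relevance score, the Top-K step reduces to picking the indices of the highest scores. A minimal sketch follows, assuming one score per page in document order. The function name is illustrative and the scores are made up.

```python
import heapq
from typing import List, Sequence


def top_k_pages(scores: Sequence[float], k: int = 3) -> List[int]:
    """Return the 0-based indices of the k highest-scoring pages, best first."""
    return heapq.nlargest(k, range(len(scores)), key=lambda i: scores[i])


page_scores = [0.1, 0.9, 0.4, 0.7, 0.2]  # one late-interaction score per page
print(top_k_pages(page_scores))  # [1, 3, 2]
```

Only these K pages are passed on to the vision-language model, which bounds the cost of the extraction stage regardless of document length.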

Performance metrics show consistent accuracy across different document types:

- Clean PDFs: ~50% accuracy

- Scanned documents: ~40% accuracy

- Resilient performance with varying document quality

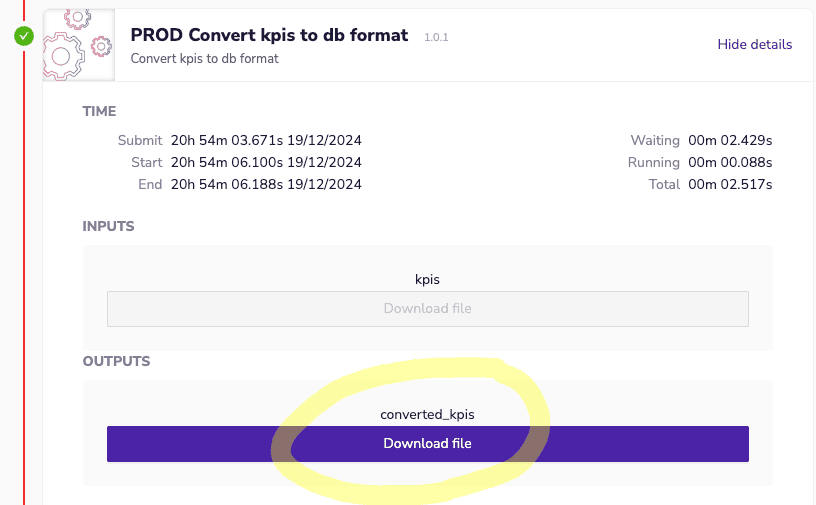

3. Data extraction

The extraction process combines vision-language understanding with structured data parsing:

- Dynamic prompt engineering based on target KPIs

- Automated unit normalization and standardization

- JSON output formatting for database integration
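
Unit normalization and JSON formatting can be sketched as follows. The multiplier table, the choice of "thousands" as the target unit, and the function names are all assumptions made for illustration. The KPI key is taken from the sample payload shown later in this article.

```python
import json
from typing import Dict

# Hypothetical unit table; the real set of unit spellings is not specified here.
UNIT_MULTIPLIERS: Dict[str, float] = {
    "units": 1.0,
    "thousands": 1_000.0,
    "millions": 1_000_000.0,
}


def convert(value: float, from_unit: str, to_unit: str = "thousands") -> float:
    """Re-express a value reported in from_unit in terms of to_unit."""
    return value * UNIT_MULTIPLIERS[from_unit] / UNIT_MULTIPLIERS[to_unit]


def to_json_record(kpis: Dict[str, float]) -> str:
    """Serialize KPI values with stable key order for database ingestion."""
    return json.dumps(kpis, sort_keys=True)


record = to_json_record({"cx_fi_income_net_trading": convert(2801.0, "thousands")})
print(record)
```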

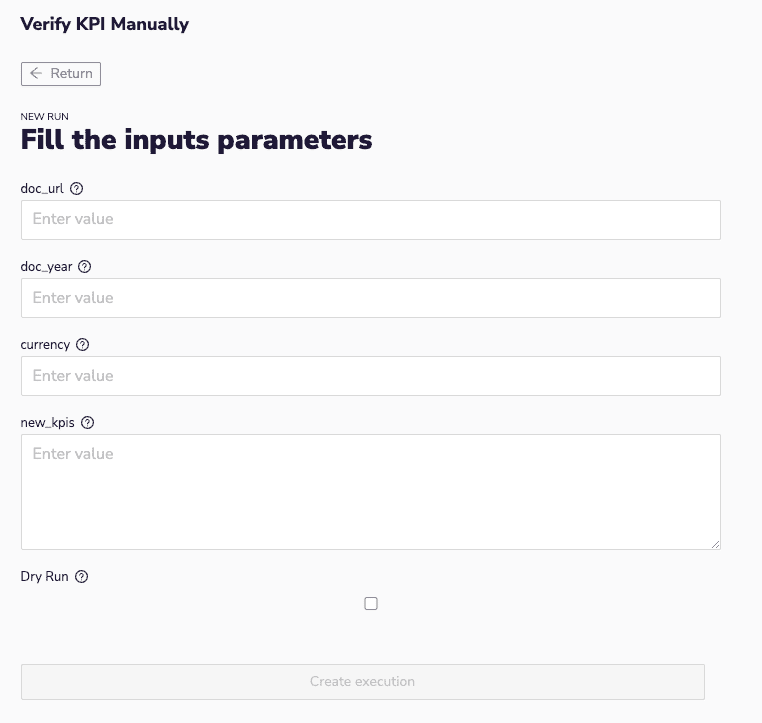

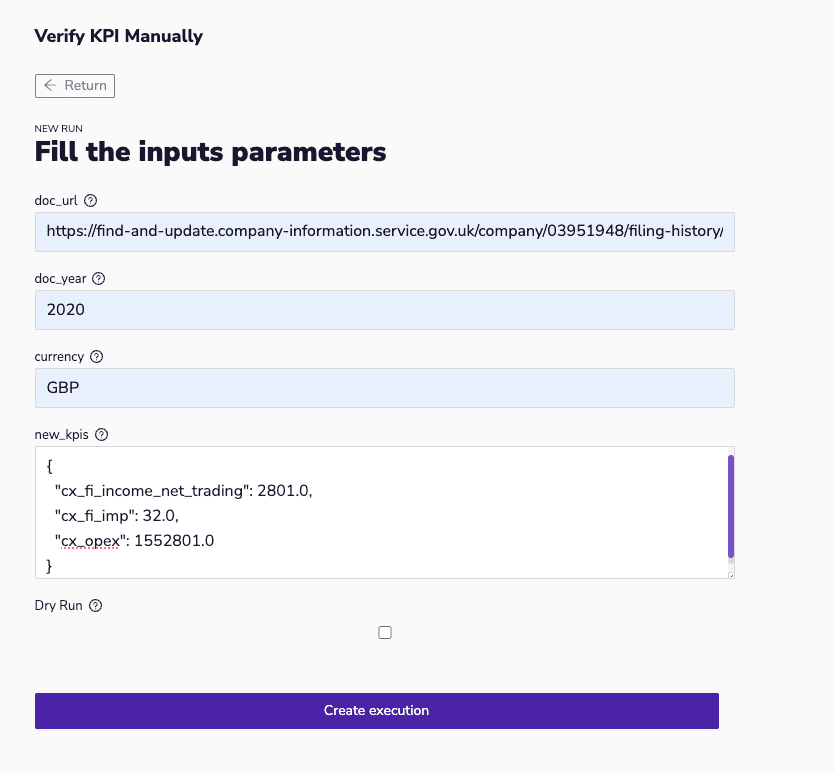

4. Manual validation workflow

The manual verification workflow provides a critical human-in-the-loop validation process to ensure accuracy of extracted KPIs while maintaining the efficiency of automated processing. This hybrid approach combines the speed of automated extraction with the precision of human verification.

The verification interface provides a comprehensive form for KPI validation, showing:

- Document URL for traceability

- Year of financial data

- Currency specification

- JSON format KPI values for review

- Dry run option for safe validation

This interface serves as the main entry point for human validators to review and adjust extracted KPIs before committing them to the database.

The verification process follows a structured approach:

Initial review:

- Retrieve JSON output from automated extraction workflow

- Access automatically identified relevant pages

- Review initial KPI extraction results

Validation steps:

- Compare values against source documents

- Adjust numerical values if needed

{ "cx_fi_income_net_trading": 2801.0, "cx_fi_imp": 32.0, "cx_opex": 1552801.0 }

- Verify currency and units

- Option to perform dry run validation
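
The dry run option can be thought of as running every validation check while skipping the final write. The sketch below illustrates that pattern with an in-memory dictionary standing in for the database. The function names and the specific checks are assumptions, not the interface's actual implementation.

```python
import json
import math
from typing import Dict, List


def validate_kpis(payload: str) -> List[str]:
    """Return the problems found in a JSON KPI payload (empty list = valid)."""
    try:
        data = json.loads(payload)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["payload must be a JSON object"]
    problems = []
    for key, value in data.items():
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            problems.append(f"{key}: value must be numeric")
        elif not math.isfinite(value):
            problems.append(f"{key}: value must be finite")
    return problems


def submit(payload: str, store: Dict[str, float], dry_run: bool = True) -> List[str]:
    """Validate the payload; write to store only if valid and not a dry run."""
    problems = validate_kpis(payload)
    if not problems and not dry_run:
        store.update(json.loads(payload))
    return problems


database: Dict[str, float] = {}  # stand-in for the real database
print(submit('{"cx_opex": 1552801.0}', database))          # dry run: nothing written
print(submit('{"cx_opex": 1552801.0}', database, dry_run=False))
print(database)
```

Defaulting `dry_run` to `True` means a validator has to opt in explicitly before anything is committed.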

The verification workflow serves as a crucial complement to the automated extraction process, ensuring data accuracy while maintaining operational efficiency. The interface design and implementation focus on user experience while maintaining robust data validation and security measures.

Current performance

Information retrieval from financial documents, particularly tables, remains a significant challenge in the field. Our implementation, built on Ryax's workflow engine, demonstrates competitive performance while addressing practical deployment concerns.

Benchmark context

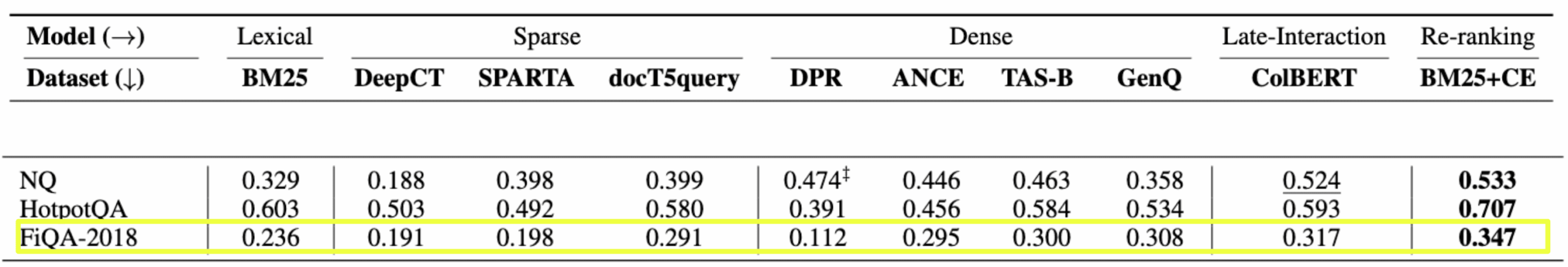

This comprehensive benchmark comparison presents nDCG scores across different model architectures, from simple lexical approaches to advanced late-interaction models. Of particular interest is the FiQA-2018 row, highlighting the challenging nature of financial document processing. The ColBERT architecture, which we adapted for our implementation, achieves a score of 0.317, with only the re-ranking approach (BM25+CE) performing marginally better at 0.347. This validates our architectural choices while highlighting the inherent complexity of financial information extraction.

The complexity of financial document processing can be better understood by examining the characteristics of various document understanding tasks.

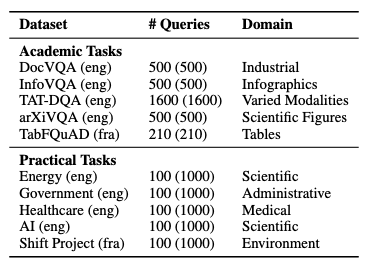

While financial document processing shares characteristics with Industrial (DocVQA) and Table (TabFQuAD) tasks, it presents unique challenges due to the structured yet variable nature of financial reports. The query volumes (500-1600 per dataset) provide context for evaluation robustness.

Domain-specific performance

Our system demonstrates robust performance across different document types:

- Clean digital documents

  - 50% average accuracy for KPI extraction

  - Consistent performance across different financial statement layouts

  - Reliable handling of various unit formats (thousands, millions)

- Scanned documents

  - 40% accuracy maintained despite OCR challenges

  - Resilient to common scanning artifacts

  - Effective handling of table structure variations

Our system's performance must be considered in the context of different document processing approaches, from basic text extraction to advanced captioning.

Technical optimizations

Through Ryax's workflow engine, we implement several critical optimizations:

The Qwen2-VL 7B vision-language model processes queries and documents, while the retrieval system manages efficient page selection. The Top-K pages component enables focused processing of relevant content, optimizing both accuracy and resource usage. This architecture, implemented through Ryax's workflow engine, ensures efficient resource utilization while maintaining processing accuracy.

Resource management

- Dynamic GPU allocation for vision-language models

- Automated memory optimization through image resizing (1.0x to 4.0x)

- Efficient batch processing with failure recovery
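
Resizing and failure recovery can be combined into a retry loop that moves to a stronger resize factor whenever a step runs out of memory. The sketch below assumes that larger factors mean smaller images, and uses Python's built-in `MemoryError` as a stand-in for a GPU out-of-memory error. `embed_with_fallback` and `fake_embed` are hypothetical names.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def embed_with_fallback(embed: Callable[[float], T],
                        factors: Sequence[float] = (1.0, 2.0, 4.0)) -> T:
    """Call embed(factor) with successive resize factors until one succeeds."""
    last_error = None
    for factor in factors:
        try:
            return embed(factor)
        except MemoryError as exc:  # stand-in for a GPU out-of-memory error
            last_error = exc
    raise RuntimeError(f"all resize factors failed: {list(factors)}") from last_error


def fake_embed(factor: float) -> str:
    """Simulated embedding step that only fits in memory once images are resized."""
    if factor < 2.0:
        raise MemoryError("simulated out-of-memory at full resolution")
    return f"embedded at factor {factor}"


result = embed_with_fallback(fake_embed)
print(result)
```

Trying the least aggressive factor first preserves as much image detail as memory allows, which matters for small digits in dense financial tables.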

Processing pipeline

- Parallel CPU operations for document preprocessing

- Smart chunking for large document handling

- Automated sanity checks ensuring result consistency

Current limitations

- Current bottleneck in lexical embeddings:

  - Lexical gap in financial terminology

  - Context preservation in table structures

  - Tradeoffs between embedding quality and compute resources

- Memory constraints for large documents:

  - Memory requirements for high-resolution documents

  - GPU utilization and optimization for large batches

- Processing speed vs accuracy tradeoffs:

  - Complex table layouts impact performance

  - Unit conversion reliability

  - Multipage context maintenance

These limitations inform our ongoing development roadmap, with Ryax's modular architecture enabling incremental improvements without disrupting production workflows.

Our measured performance demonstrates that practical, production-ready financial document processing is achievable while maintaining competitive accuracy. The system's ability to handle both clean and scanned documents with consistent performance makes it particularly valuable for real-world applications.